What do people mean by ‘Artificial Intelligence’?

This personal essay by Conrad Taylor explores some background concepts and distinctions, which we did not have time enough to explore in the NetIKX meeting of 26 August 2018, and shows how the field of ‘Artificial Intelligence’ research has sought to progress beyond simple algorithmic processing, to create machines which can learn from their data environment and reprogram themselves.

The NetIKX meeting of 26 August 2018 was billed as being on the topic of ‘Machines and Morality: Can AI be Ethical?’. Our speakers addressed many important issues, about how computers are used, but not that question.

In a number of short stories, later collected as ‘I, Robot’, and in a series of three detective novels which pair New York detective Elijah Bailey with a robot partner, R. Daneel Olivaw, author Isaac Asimov worked through a series of thought experiments, in which sentient robots have to reconcile their experiences in the world with the Three Laws of Robotics implanted in their ‘positronic brains’ to prevent them turning on humans. These (see below) were first expressed as a group in the short story ‘Runaround’ (1942).

The Three Laws of Robotics (Asimov)

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

For one thing, the ethical focus was on the responsibilities of humans in how they commission, program, train and deploy automated algorithmic decision processes. At no point was the ethical capacity of machines addressed.

That can be said to be to the speakers’ credit, because although the ethical capacity of artificial humans has been a frequent theme of science fiction since at least Mary Shelley’s ‘Frankenstein’ — and explored entertainingly and fruitfully by Isaac Asimov in his ‘I, Robot’ short stories and ‘Caves of Steel’ trilogy — there seems no evidence that machinery is anywhere nearing a moral sense. When an autonomous robot vehicle kills someone, it will not be the car that appears in the dock. But will it be the owner? The company? The programmer?

I felt more disappointed that no clear definition was offered of ‘artificial intelligence’, missing an educational opportunity. Tamara Ansons treated AI and neural networks as if they mean the same thing, which historically they do not; while Stephanie Mathison focused on the use of algorithmic processing to guide decision-making, which is something else again.

Given a short span of time available that afternoon, doing a ‘deep dive’ analysis probably would not have worked for NetIKX. However, to satisfy my own curiosity, I decided to read further, unpick the various meanings of AI as they have evolved historically, and set out my understandings. I found the AI field generally to be convoluted and many-stranded; to have taken broadly three major tacks since the 1950s; and to challenge easy understanding. Apologies, then, if my own attempt at an account is less of a ‘deep dive’ than a snorkel exploration near the surface.

Of algorithms and automata

Al-Khwarizmi the Persian mathematician, as imagined for a Soviet-era postage stamp.

A central theme of our meeting was algorithms. As both Tamara and Stephanie pointed out, an algorithm is a set of instructions, translated into a stepwise sequence of actions, which gets something done: a baking recipe is a simple and everyday example.

The word ‘algorithm’ itself derives from the name of the ninth century Persian mathematician Muhammad ibn-Musa al-Khwarizmi, who as well as popularising place-value arithmetic with Hindu numerals, was the first to set out stepwise procedures for solving linear and quadratic equations, thus founding the branch of mathematics we call ‘algebra’.

It was not then possible for algorithms to be executed by machines. Only a human being could solve an equation – or for that matter tie shoelaces, bake a loaf, or weave a basket. However, ingenuity had previously been applied to automating certain tasks. I recently realised that an early example is the evolution, some seven thousand years ago, of the hand-loom for weaving cloth, with its mechanical array of ‘heddles’ used to raise and lower alternating warp threads so that the shuttle could easily pass the weft between them.

With increased skill in making machinery in the ancient Greek world, plus intellectually curiosity, came the invention of various kinds of automata – the word means ‘things which act of their own will’. However, this label was a misnomer, as what these devices did was use physical mechanisms to execute a stepwise pattern of actions ‘programmed’ by the inventor. One notable early practitioner was Ktesibos, first head of the Great Library of Alexandria, who devised amongst other curiosities a mechanical model owl, powered by a flow of water, which acted out bird-like movements. (There were parallel developments in China.)

The Greek imagination was also stimulated in the direction of what we might consider the first ‘science fiction robots’, such as the mythical bronze warrior Talos – said to have been created by Hephaistos, smith to the Olympian gods.

Algorithmically controlled machines

A Jacquard loom for weaving patterned fabric, featuring the chain of punched cards which ‘program’ the operation of the loom’s heddles.

If we recalibrate the word ‘automaton’ so that it no longer implies having a will of its own, we see that automata are now indispensable in modern industry. Again, the business of weaving led the way in the 18th century, culminating in the Jacquard loom which, controlled by a continuous chain of punched cards to control the heddles, wove complex repeated fabric patterns. This was an important step along the way to computational machinery, inspiring Charles Babbage and Lady Ada, and we can think of the sequence of cards as an algorithmic program cast into physical form.

Numerical Control was a trend in the operation of machine tools, starting just after World War Two, first using punched tape, and later software in electronic computers, to control lathes and milling machines, welding and sewing operations, etc. Today’s production-line industrial robots are descended from this tradition, with hardware generalised to be able to perform a broader set of tasks, and with programs which can be rapidly swapped in to direct a wide variety of operations.

In essence a 3-D printer, even a desktop inkjet printer, are animals of the same type. But I hope it is obvious that just because a process is algorithmically controlled does not imply the least iota of ‘artificial intelligence’.

Essentially this is not altered when an algorithmically controlled system operates in computational space only. The example cited by Stephanie – the Washington DC program used to rank teacher performance on the basis of the test scores of students from one year to the next – is of this type. Any ‘intelligence’ (or indeed stupidity) is not attributable to the machine, but to the people who create the software, and who blindly act on its recommendations.

Cybernetics and feedback

So far we have considered only machine algorithms that ‘feed forward’ relentlessly like a player-piano. Things get more interesting when feedback loops are introduced. An early and famous example was James Watt’s ‘governor’ mechanism for engines, which responded to excessive speed by throttling back the steam supply. A bimetallic-strip thermostat is another easy to understand feedback mechanism that reacts to environmental conditions, as is the ballcock in a toilet cistern.

Cybernetics (from the Greek word κυβερνήτης for a helmsman) is the name of the cross-disciplinary field which studies regulatory systems, not only mechanical, but also in animals and in social systems.

Although the term ‘cybernetics’ is hardly used these days, computer programs have vastly extended the scope of machine interactions with the environment. An ATM, for example, has multiple input steps – it reads your card’s chip, asks for and processes your PIN, asks how much cash you want to take out. As for your word processor, it is constantly scanning to see what key you will press next, or what menu command you will invoke.

Here, the algorithmic system is made more complex by the loops in the software which scan for input and use if–then–else loops to evaluate and act accordingly.

Interactive algorithmic software is very complex – perhaps nobody on the engineering team fully understands what is going on beneath the hood. However, it is in principle knowable, and also, it is incapable of autonomous ‘thought’. The systems critiqued in Cathy O’Neil’s Weapons of Math Destruction are all of this type: they are not artificial intelligences.

The quest for ‘artificial intelligence’

The intellectual background to the postwar vision of creating a machine which could replicate human intelligence, has surprisingly deep roots in the philosophical and mathematical thinking of Ramon Llull, René Decartes, Thomas Hobbes, Gottfried Liebnitz and others, who shared a tradition of seeing formal logic as the epitome of thought, and considered that all rational decision-making could in principle be reduced to logical operations.

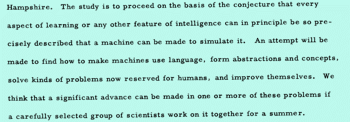

The historic Dartmouth College proposal document which started the discipline of artificial intelligence studies is available online as a scanned typescript.

From the 1930s, neurology demonstrated that the brain was an electrical network of neurons, communicating by firing all-or-nothing pulses across the synapses; contemporaneously, Claude Shannon’s information theory described all-or-nothing digital signals; and Alan Turing’s theory of computation showed that any mathematical operation could be done digitally, with ones and zeros. The confluence of these ideas suggested in the late 1940s that an artificial brain could be made.

The term ‘artificial intelligence’ itself was coined in 1956 to advertise a summer brainstorming workshop at Dartmouth College, which can be said to have founded the subject as an academic field of study. It was organised by mathematician John McCarthy; amongst those who attended were Claude Shannon and Marvin Minsky. The proposal for the workshop offered this definition:

‘The [summer school] study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it. An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves.’

(The typed proposal is online as a scanned document at http://raysolomonoff.com/dartmouth/boxa/dart564props.pdf)

Thus it can be seen that at the outset, the AI enthusiasts seemed to have a rather reductionist view of what human intelligence is, and great optimism about the speed at which a machine version of it might be achieved.

Early hype and hubris about AI

Early progress in getting machines to play games, solve algebraic problems etc. encouraged a great deal of optimism, and enthusiasts talked up the field, claiming that fully intelligent machines were only two decades away, or less. Consider Marvin Minsky’s prediction in Life magazine (1970) – ‘In from three to eight years we will have a machine with the general intelligence of an average human being’. Large grants were made available from government sources such as the US Defense Advanced Research Projects Agency (DARPA).

However, any attempt to extend the scale of problems to be tackled by machines soon ran up against the technical limits of the time. Ross Quillian’s promising early work in natural language processing, based on semantic net techniques, used a vocabulary of only 20 words – all that could fit into computer memory back then. The approach of exploring a maze of possible answers starting from a set of known premises, called ‘reasoning as search’, also rapidly smashed into memory and processing constraints: as the maze of possible solutions deepened and thickened, the exponential ‘combinatorial explosion’ of terms overwhelmed the available machinery.

This first wave of AI research ended in what has been called the first ‘AI Winter’ as, disappointed by stalled progress, important funding bodies in the US and UK terminated their support in the late 60s and early 70s.

However, some useful experimental foundations had been laid. One approach that has evolved to become of great current interest was Rosenblatt’s ‘perceptron’, a trainable algorithm used for yes/no classification decisions, initially tested and tuned on low-resolution image-recognition tasks.

1980s: ‘expert systems’ and knowledge

Artificial Intelligence research, even if after the first AI Winter it ‘dared not speak its name’ for fear of ridicule, revived and set new directions in the 1980s. This period should be of great interest for us in the information and knowledge management space, because so-called ‘expert systems’ were an attempt to replicate the decision-making abilities of human experts in various narrowly-defined fields of expertise. While these fields of application might be considered niche, they were practical enough to attract huge amounts of corporate funding for the first time.

Edward Feigenbaum expressed the new paradigm when he declared that ‘intelligent systems derive their power from the knowledge they possess rather than from the specific formalisms and inference schemes they use.’

An expert system consists of two parts: an inference engine and a knowledge base. The inference engine is an automated reasoning system, with Lisp and Prolog being popular AI software development environments at the time, while the knowledge base is a formalisation of facts which have been gathered from human experts. In fact the biggest barrier faced by all these knowledge-based systems has been and continues to be the knowledge acquisition problem, which is determined by the relative scarcity of domain experts who have the time and are willing to contribute their knowledge to the project.

Biomedical applications were amongst the first use-cases, such as MYCIN (written in the 1970s in Lisp) which could identify bacteria causing severe infections, using a fairly simple inference engine operating with a knowledge base of about 600 rules.

MYCIN was never implemented in medical practice for a number of reasons including medical ethics concerns. But other expert systems proved very successful. For example, in the early 1980s the Digital Equipment Corporation used the SID system, again written in Lisp, to apply a set of rules created by expert computer-logic chip designers. It went on to specify 93% of the logic gates in the CPUs of the VAX 9000 family of supercomputers.

Although the term ‘expert system’ has fallen out of use, it represents the first wave of practical outcomes from AI, and the approach has permeated into important methods used in contemporary knowledge organisation, such as Linked Data, and ontologies like OWL as used in Semantic Web applications. These approaches model knowledge in explicitly coded ways that makes it possible for machines to process it usefully (which does not mean that they understand it).

Success and sublimation

During the 1990s and into the millennium, the field of ‘artificial intelligence’ seemed to evaporate, paradoxically just as some of its projects were flourishing and becoming mainstream, for example in speech recognition, natural language processing, medical diagnosis, data mining and automated financial trading. Behind this we may note several factors:

- developments were happening inside software companies with commercial goals in mind, more than in universities; therefore, developments were closely guarded secrets and not published in the technical literature;

- because the term ‘AI’ had become associated with wild sci-fi ideas, it was more prudent to call these projects something else;

- it was now possible to train systems with very large amounts of data – not just stored locally, but across networks and the Web – and computing power had evolved to cope.

One such application which touched the lives of billions was Google’s search engine, and its algorithms devised to determine a ranking of relevance by examining links between pages.

It has been wittily observed that as soon as AI research manages to solve a formerly intractable problem, such as Optical Character Recognition, it is no longer considered ‘AI’.

The Deep Learning revolution

Since the inception of AI research, one of the key goals has been to create machines which do not simply process a predetermined cluster of algorithms, however sophisticated, but actually ‘learn on the job’ without relying on a human-supervised training programme and a carefully selected training set. What has caught the imagination of the technical and business communities in the last 15 years, is a set of technologies that appear to be capable of just that, delivering concrete benefits in the process.

The perceptron, again. The roots of this technology can be traced to Frank Rosenblatt’s 1950s work on the ‘perceptron’. This was an example of the simplest kind of neural network described by Tamara in her NetIKX talk, a ‘linear threshold unit’, in which a layer of inputs is processed directly and fed in a forwards-only direction to the output layers. A training process adjusts the ‘weighting’ or significance attributed to each input, to reach the yes-or-no threshold for a classificatory conclusion.

One example of such a function that is central to image processing and machine vision is ‘edge detection’, where a high contrast in the brightness values between adjacent pixels is assumed to indicate an edge in the real world.

Adding subtlety and more layers. We have already noted that early neural network experiments were hampered by the computational limits of the time. However, there were also problems with the design of these neural net systems. This is where decades of research have made a difference. One example was an elaboration beyond the ‘yes–no’ kind of output, to one outputting a graduated range of output values, which because of its S-shaped plot on a graph is often called a sigmoid function.

Improvements were gained by interposing multiple computational layers between input and output, as Tamara briefly described. These are often described as ‘hidden layers’ and it is what makes deep machine learning systems ‘deep’.

Novel rules and structures for neural nets

One successful model of deep neural net is the convolutional neural network, inspired by work on the animal visual cortex. CNNs are feed-forward networks with many hidden layers, accelerated by having each stack of artificial neurons processing (at least initially) only a small sub-set of the total field of inputs, working massively in parallel. Image and video data is naturally segmentable, and CNNs excel in image/video recognition tasks. CNNs can ‘learn’ to recognise patterns in the data without much prior training.

Deep learning architectures were initially used in feed-forward cascades, but later incorporated ‘back-propagation’ techniques which compare the actual output values to the desired or expected values, adjusting the weighting algorithms back through the neural layers to optimise the results over time. Back-propagation typically relies on sigmoid-function output values, because these allow greater subtlety in adjusting the weightings.

A persistent problem in training neural nets was identified in 1991 as the ‘vanishing gradient problem’. This occurs when an error value, backpropagated to optimise the accuracy of performance, is so very small that it cannot adjust the weighting in the algorithms in current use, such that further learning cannot occur.

Time and memory. There is a broad class of neural networks called recurrent neural networks (RNNs) which use their internal state (memory) to process sequences of inputs, one after another. Bringing in the time dimension has made RNNs very successful at tasks such as the recognition of speech and joined-up handwriting.

Amongst the various flavours of RNN, remarkable progress has been made by the long short-term memory (LSTM) model, proposed in 1997 by Sepp Hochreiter and Jürgen Schmidthuber. In a LSTM neural network, the units (artificial neurons) have not only a computational cell, an input gate and an output gate, but also a ‘forget’ gate. When the cell receives an input, it can hold onto it for a very large number of cycles in local memory, until instructed to forget it. LSTM networks have been successful in overcoming the vanishing gradient problem, and have superior performance in processing time-series data.

Google, Apple and Amazon now use LSTM for important logical components of consumer electronics: Google use it for speech recognition in Android, and for Google Translate; Apple use it for iPhone ‘Quicktype’ and for Siri; Amazon use it for Alexa. It has also been used for phoneme recognition, semantic parsing of natural language input, handwriting recognition and robot control.

Exploiting the power of cheap GPU chips

It’s easy to think of the synaptic links between neurons in the brain and imagine that artificial neurons are ‘wired up’ the same physical way, but they are in fact implemented in software, running on fairly standard computer chips.

In the early 2000s a new class of processor was invented to meet the growing demand for fast processing of image data, such as rendering smooth moving images of virtual worlds in computer gaming. These chips offload this task from the computer’s Central Processing Unit (CPU), and are known as graphics processing units (GPUs). Nvidia is a prominent manufacturer.

GPUs are, naturally enough, optimised to process video data, which is a massively parallel operation. But that parallel-processing capability can also be exploited for a wider range of tasks, by various neural network architectures. Tasks can also be divided over multiple GPUs, and then combined.

A GPU is now a commodity electronics item, which has brought huge computing power to AI research, at low cost. Recent advances in neural network computing have also benefited by access to enormous datasets, partly because of the plummeting cost of fast-access storage, but also because of Internet-scale networking.

Deep learning or discovery?

The early declarations regarding artificial intelligence envisaged machines that would learn from their experiences in the world. In fact, getting machines to take decisions the way a human would, have mostly involved humans laboriously constructing decision logics and ontologies, and structuring large amounts of knowledge (especially in the ‘expert systems’ approach).

Another approach, highlighted in her talk to NetIKX by Tamara, involves a supervised learning set-up period, using a training set of sample data, consisting of paired inputs and correct outputs.

Deep reinforcement learning is a new approach largely pioneered by British start-up DeepMind (since acquired by Google). A task is set up with minimal guidance, but the better the result obtained, the greater the ‘reward’ given to the system: the system then finds its own way of attaining the best possible score.

DeepMind started their research by running a deep learning stack on a convolutional neural network, to play old Atari games such as BreakOut. The only data to which the system had access, was the pixel display produced by the game program! More recently, their AlphaGo system gained fame beating world masters of the East Asian strategy game Go. Its 2017 successors, AlphaGo Zero and AlphaZero, were entirely self-taught, and trained by playing against themselves.

DeepMind has made recent strides into applying its techniques to the diagnosis of medical images, especially of the eyes and kidneys, and these will be considered in the section below on machine learning successes. Another biomedical field in which this approach has been proving its value is in drug discovery.

One discomfort which many people have expressed with these new largely unsupervised machine learning techniques – which one might characterise as ‘learning without teaching’ or even ‘discovery’, is that we can get to the point where the machine outperforms the human, be it in game-playing or medical diagnosis, and yet we have no idea by what ‘logic’ it made its decisions. For some, this is an ethical or at least a philosophical problem.

DeepMind Health case studies

If we are to examine some British-based case studies in the application of deep machine learning neural nets, consider DeepMind’s forays into healthcare computing. Amongst other things, they illustrate naïveté in the handling of patient data, though rapidly corrected in later work.

In 2016, DeepMind’s collaboration with the Royal Free Hospital group, ‘Streams’, came under press scrutiny. The aim of the project was to process masses of data to monitor risks amongst kidney patients. However, what the Royal Free handed over to DeepMind was fairly complete confidential records of 1.6 million patients annually, across three hospital sites, including admissions, discharge and transfer data, psychological and HIV diagnoses and abortion histories. The deal was criticised by the NHS National Data Guardian, Dame Fiona Caldicott, and by the Information Commissioner’s Office.

Later in 2016 and 2017, DeepMind entered into other partnerships with UCLH and Cancer UK’s centre at Imperial College for automated detection of tumour tissue, and with Moorfields Eye Hospital and UCL for analysing eye scans. I shall focus on the latter case as the results are very promising, there was a recent news release in July 2018, and it shows the partnership has learned about data responsibility.

Scanning the scans. Preventable eye diseases including glaucoma, macular degeneration and diabetic retinopathy affect hundreds of millions of people worldwide, and can cause debilitating blindness. For diagnosis and monitoring, opthalmologists use a variety of tests, from simple reading-card acuity checks to various kinds of medical imaging.

Until recently, the ‘state of the art’ was 2-D retinal photography. The latest thing is optical coherence technology (OCT), which has been described as like ultrasound scanning, but with light. The device returns a micrometer-resolution 3-D digital model of the retina, to a depth of more than a millimetre. (I have had a few of these scans in the last year: it is clear that the information about retinal structure in depth is far superior to the former 2-D approach).

The problem is, interpreting these scans is not easy; it takes time and the applied knowledge of top-level staff (who are not deemed qualified until after at least nine years’ training). At Moorfields, they have been accumulating scan data faster than their experts can analyse them. This risks missing opportunities for early intervention in priority cases.

In 2016, Moorfields and the UCL Department of Opthalmology sought DeepMind’s co-operation in applying deep machine learning to the problem. Avoiding the issues which had arisen in the Royal Free case, the first step was to completely anonymise the historical scan data, recording only the scans themselves and what the expert diagnosis had been in each case. This provided a large training set of one million scans for deep reinforcement learning.

Two years on, and the initial results are promising. The system can recommend the correct referral decision for over 50 eye diseases, with 94% accuracy, matching the performance of the best human experts. (https://www.moorfields.nhs.uk/content/breakthrough-ai-technology-improve-care-patients).

As DeepMind has explained, two neural networks were created, each for a particular role: the first, the ‘segmentation network’, was trained to use the light-scattering in the deep retinal images to differentiate kinds of eye tissue and generate a 3-D digital map, marking detected features such as lesions and micro-haemorrhages. The second, the ‘classification network’, has been taught to mark each case as appropriate for one of the four care pathways currently used by Moorfields. This two-level approach will make it easier to adapt the system to other kinds of scanning technology (and treatment options) in the future.

Another point made by DeepMind is that they recognised that the clinicians wanted the system to ‘explain its reasoning’ and pinpoint which parts of each scan led to the conclusions drawn.

Moorfields and DeepMind representatives explain their project in the YouTube video below.

Conclusions

In preparing this review essay, I have seen nothing to suggest that any machine reasoning can be called ‘intelligent’ without robbing the word of meaning. I recommend Alan Jones’ article on Medium, ‘Are current AIs really intelligent?’ — https://medium.com/datadriveninvestor/are-current-ais-really-intelligent-b5e74b66dbcd

A fortiori, such systems cannot display morality, though there are many ethical questions about how humans commission, design and act of the recommendations of these systems.

To summarise where we are at, in a simplistic way:

- Research into machine reasoning from the 1980s, especially the ‘expert systems’ and knowledge representation approach, has delivered benefits within our field of information and knowledge management. In particular it has encouraged greater rigour in coding information within knowledge-bases, and has strong potential to help deliver projects such as the Semantic Web.

- Neural networks have evolved in design, and have gained access to powerful and relatively inexpensive hardware. We can expect them to be used in many ‘recognition and classification’ tasks with increasing success – e.g. image and video and speech recognition, natural language processing, machine translation, medical diagnosis from imaging, and many other fields. Some are already consumer applications.

- In so far as ethical questions are involved, they pertain to the motivations and behaviours of people, not machines. It is people who ingrain biases into algorithms, people who weaponise facial recognition, people who arrange for the surveillance of populations, people who may switch on the capabilities of potentially autonomous killer robots such as the Samsung SGR-A1 guns installed across the Korean peninsula.

- A lot of money coming into this field is linked to autonomous vehicle development. The ‘ethics’ of driverless vehicles has been much written about, and I have not addressed it here.

- The field has survived two ‘AI Winters’ and is currently experiencing a heatwave. The usual suspects such as Google, Facebook, Amazon, Apple and Microsoft are all clambering to take the lead, and in this struggle we can increasingly expect scientific openness to be replaced with commercial secrecy.

I hope this brief essay will help clarify discussion about what we are talking about. Writing it has helped to clarify things for me. Thank you for reading.

— Conrad Taylor, August 2018

Some suggested reading

(This is still in the process of being compiled.)